I Fed Brewfather's API to Claude and Built My Own MCP Server

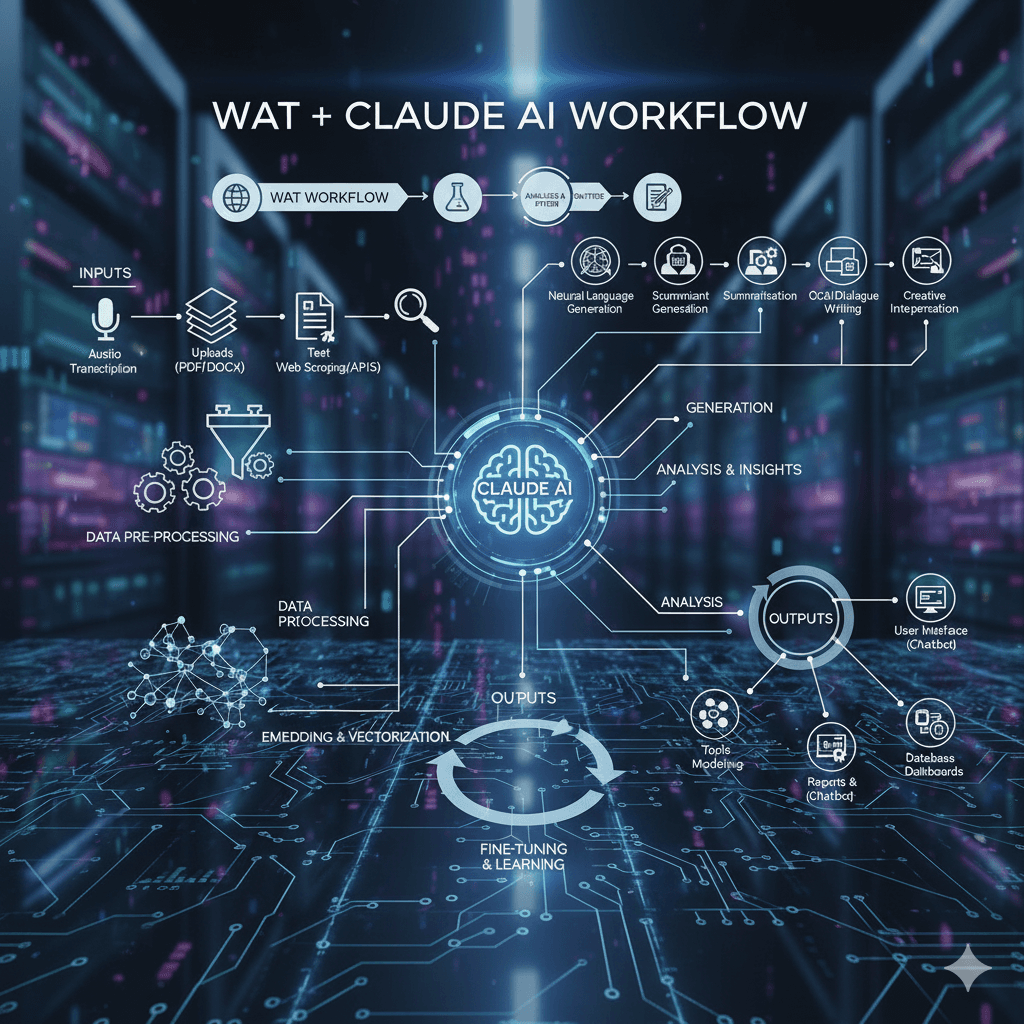

Brewfather is a comprehensive app for homebrewers that manages recipe design, batch tracking, fermentation logs, water chemistry, and ingredient inventory, making it a central hub for homebrew recipes. An MCP (Model Context Protocol) server acts like a plugin that exposes local tools and data to an AI such as Claude, letting it call a locally run server without any cloud intermediary or custom UI. Using Brewfather’s REST API, the author fed the documentation to Claude and quickly generated a local MCP server that connects Brewfather to Claude Code. This setup enables three key capabilities: browsing and inspecting real Brewfather recipes, checking the brew schedule and batch stages, and verifying whether the ingredient inventory is sufficient for an upcoming brew day. The main benefit is turning brewing data into a conversational interface, so users can simply ask questions like whether they are ready to brew on a given day. Future extensions could include logging fermentation readings, auto-creating batches from recipes, and sending notifications as batches progress through stages.